Knuth’s “Why Pi” Talk at Stanford: Part 1

A few weeks ago I had another opportunity to meet a giant in my field: Donald Knuth was giving his annual Christmas Tree lecture at Stanford, where I happened to be near at the time. The most exciting part was probably right at the very beginning, when Knuth announced that, for the first (“and probably only”) time, he had finished two books on that same day: The Art of Computer Programming: Volume 4A, and Selected Papers on Fun and Games, with the pre-order links on Amazon going up the same day.

The topic of this year’s lecture was, according to the site:

Mathematicians have known for almost 300 years that the value of n! (the product of the first n positive integers) is approximately equal to

, where

is the ratio of the circumference of a circle to its diameter. That’s “Stirling’s approximation.” But it hasn’t been clear why there is any connection whatever between circles and factorials; the appearance of

has seemed to be purely coincidental, one of the amazing accidents of nature. Recently however Johan Wästlund has found a nice explanation of this phenomenon, and the reason turns out to be connected with the theory of trees.

I found the lecture originally hard to follow; although Knuth is now 74 years old and physically growing quite frail, his mind was still evidently much sharper than the audience’s; he was completely prepared for every question regarding extensions and corner cases of the material he was presenting. I considered the notes I took to be somewhat useless since I had spent so much time just trying to follow his reasoning. However, when the video became available, I had no excuse left, so I went through it again armed with a pen and the pause button, and I now have a set of notes somewhat worthy of posting here for anyone who missed it. Any errors or inconsistencies are almost definitely my fault and not his.

The notes themselves are after the jump.

There are many combinatorial interpretations of the Catalan numbers, though for the purpose of fitting in with the theme of the lecture, he focused on their correspondence with the number of ordered binary forests onnodes (although he could just have easily have focused on their more commonly known correspondence with the full binary trees on

nodes). Knuth then demonstrated (numerically) the approximation given by

and therefore there is some connection between trees and, which he spends most of the talk trying to tease out.

The idea, he says, came from Johan Wästlund‘s article An Elementary Proof of the Wallis Product Formula for pi in the American Mathematical Monthly, Dec. 2007, 914-917. Johan had been searching for ideas for problems for the Swedish Math Olympiad, and came across a problem from Paul Halmos’ Problems for Mathematicians Young and Old. From his description and the snippets from the book’s Amazon page, I’m fairly certain it’s problem 7A on page 53, which reads:

Is it possible to load a pair of dice so that the probability of occurrence of every sum from 2 to 12 shall be the same?

It turns out that this is, in fact, not possible. Knuth says that the proof Halmos gives in this book is complicated and non-elementary (though I can’t check for myself since the Amazon preview sadly doesn’t go that far), but Wästlund wanted to see how a high school student taking the Swedish Math Olympiad might approach it. (It also seems he made the simplifying assumption that the two dice are identical).

Letbe the probability that each weighted die comes up

. Then the probability of 2 coming up when rolling a pair of them can only be

, which must equal the probability of every other sum, so we obtain

He noticed an interesting pattern to these coefficients, namely:

In order to obtain a more general form for these coefficients, let’s say. Then consider the generating function

. Squaring it gives us

However, those coefficients were all chosen to equal, so that’s really the same as

, which is the infinite series

. Taking the square root to get back to

, we get

Sincewas the generating function for

, we can conclude that

. So, for example,

Since we’re talking about probability here, of course we want, as well as

. To compute this (and generalizations of this) more easily, we observe that

where. But since

and

, the above generating function is just

so by the binomial theorem again, it equals:

. Therefore:

So it turns out thehave just as nice a pattern as the

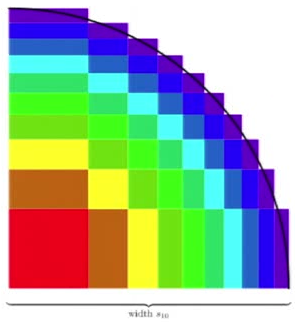

! In fact, if you plot some rectangles on the plane with borders at each of the

(therefore with a total width and height of

), you get a remarkable picture:

I think this is the point where most of us began to see where the talk was going; since the big red block in the bottom-left corner represents, it has an area of 1, and every other color has to add up to the same sum. Since there’s ten such colors, the total area of this region is 10. However, it’s quite obviously also approximating a quarter-circle of radius

! Indeed, if we could prove that the circle will always only intersect the outermost layer of blocks, then we could bound the error of this approximation with

So here’s the “cute proof”[2] that the above holds.

Notice that. Similarly,

since. From the formula,

so by applying the two inequalities from before, we arrive at the important inequality:

Now, the inner notches of the shape in Figure 1 occur at coordinates which we want to show is inside the circle. That is, we want to show

. Using the formula above we know

So,

A similar trick using the other inequality works for the outer notches as well, so we have justified the earlier bound onin completely elementary terms. This shows how

is related to

. Now we just have to turn this into factorials. Recall that

, and that

. Since we have just finished proving that

we know that

, so:

And there we have it: a simple link between factorials and the circle. It turns out that you can play the same game with any other “super-ellipse” of the form

of which the circle is only one special case (). You just have to choose a different set of coefficients:

Following the same sort of logic (claims Knuth) will allow you to approximate the area of the corresponding super-ellipse, which is

Notice thatand

, which shows what we’ve really been approximating all along!

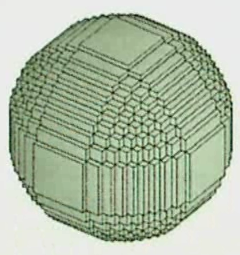

The method apparently even works in three dimensions, just by calculating the coefficients for three dice rather than two. He provided the following image to whet our appetites.

The rest of the talk focused on another combinatorial interpretation ofwhich has applications to coding theory, and a relatively uninteresting Q&A session that nevertheless did have one gem in it: a problem relating

to random mappings which Knuth cannot figure out. I’ll write in detail about both of these things in future blog posts, though, because this one has already become prohibitively large. I hope someone finds it interesting 🙂

[1]: Actually, he consistently wrotethroughout the whole talk, but the correct value is really

.

[2]: His words, not mine!

awesome! thanks! I wasn’t there, but I am surprised that nobody nitpicked the 16/48 mistake 🙂

Alessandro

January 2, 2011 at 7:19 pm

Yeah, I wonder if I had pointed it out if it would’ve gotten me one of those six free books 🙂

Adrian Petrescu

January 2, 2011 at 7:31 pm

Buna – vroiam sa iti spun ca, in calitatea unui adolescent care spera sa studieze comp sci in viitor, te admir si iti urez succes in proiectele tale 🙂

vladh

May 5, 2011 at 5:03 pm

Why is it that a 2D random walk is recurrent, while a 3D random walk is transient?…

Very nice answer, Alon! One quick note about the ‘naturalness’ of [math]\sqrt{n}[/math] in Stirling’s Approximation. There is a paper by Feller (“A Direct Proof of Stirling’s Formula”, Am. Math. Monthly, 1967) that gives us some intuition for th…

Quora

October 21, 2012 at 7:06 pm

[…] Stirling’s Approximation […]

Metric Learning: Some Quantum Statistical Mechanics | Machine Learning

November 14, 2013 at 5:03 pm

Adrian, You have done an excellent job. Since you have transcripted both part 1 and part 2 of Prof Knuth’s lectures, with mistakes corrected, how about getting all his mathematical lectures (in case you have done this to his other math videos as well) published in a book form with his permission? A great opportunity of doing something with such an eminent person.

C.Rama Murthy

January 30, 2015 at 4:06 am